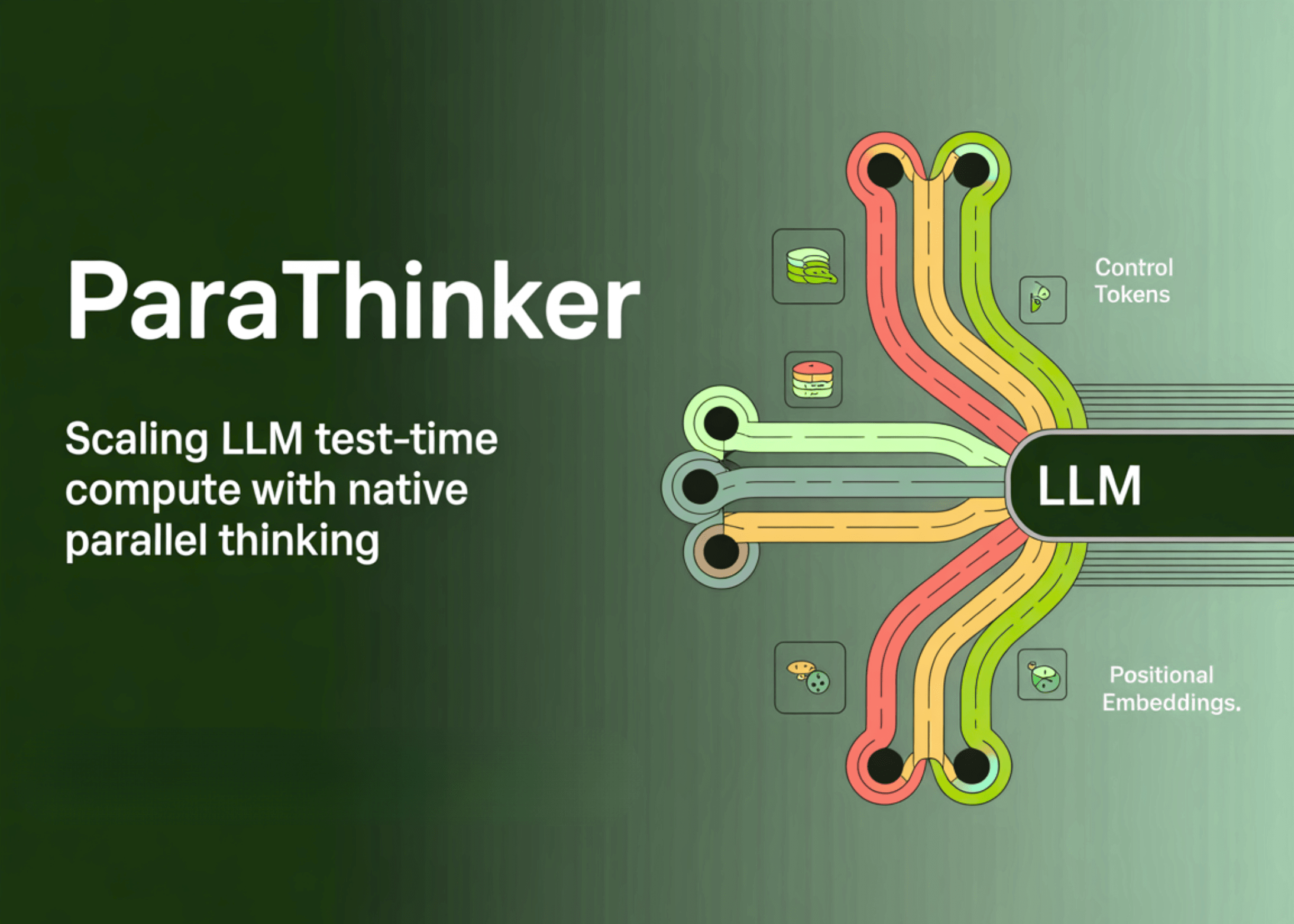

ParaThinker: Scaling LLM Test-Time Compute with Native Parallel Thinking to Overcome Tunnel Vision in Sequential Reasoning

Why Do Sequential LLMs Hit a Bottleneck? Test-time compute scaling in LLMs has traditionally relied on extending single reasoning paths. While this approach improves reasoning for a limited range, performance…

How to Build a Complete Multi-Domain AI Web Agent Using Notte and Gemini

In this tutorial, we demonstrate a complete, advanced implementation of the Notte AI Agent, integrating the Gemini API to power reasoning and automation. By combining Notte’s browser automation capabilities with…

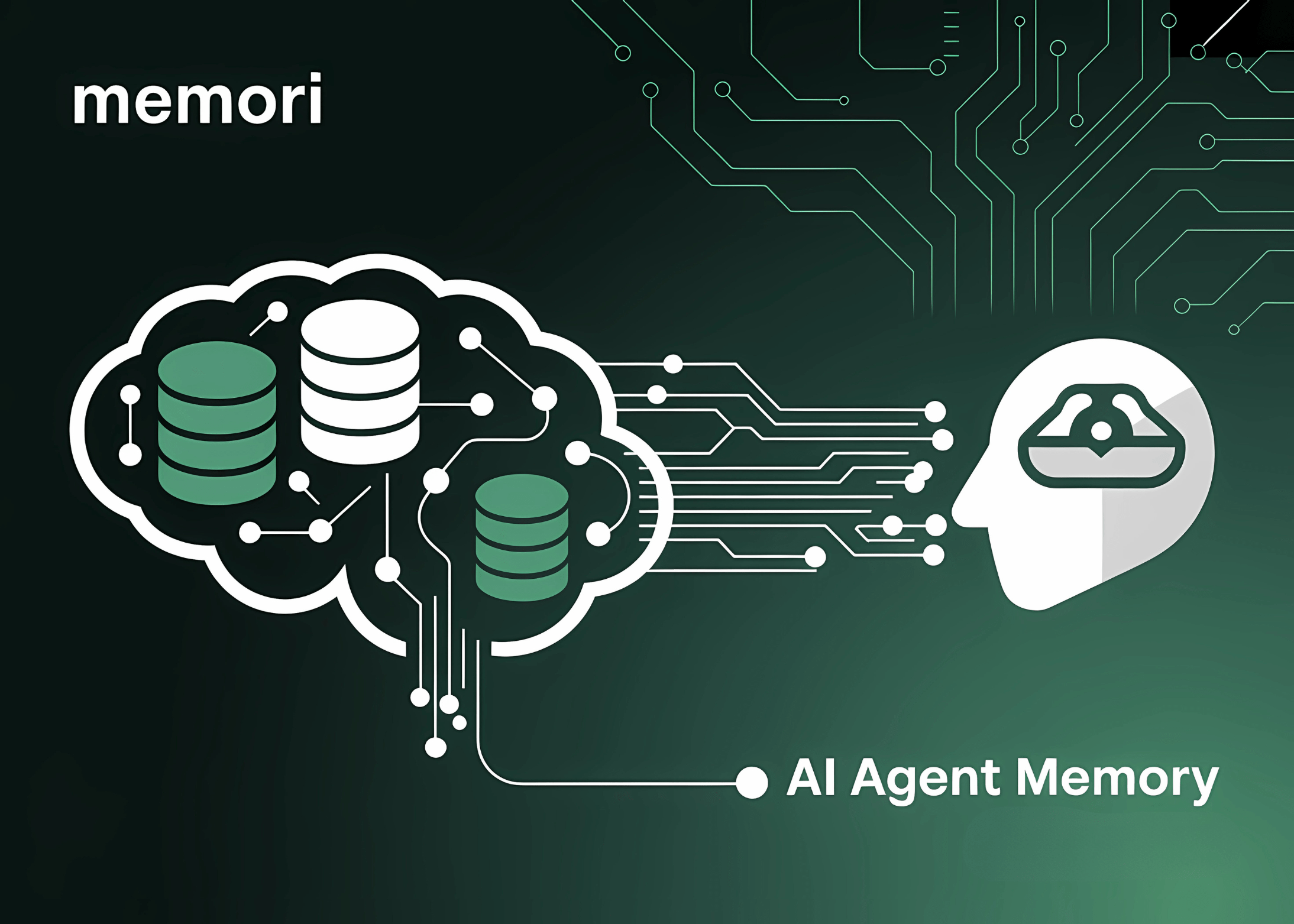

GibsonAI Releases Memori: An Open-Source SQL-Native Memory Engine for AI Agents

When we think about human intelligence, memory is one of the first things that comes to mind. It’s what enables us to learn from our experiences, adapt to new situations,…

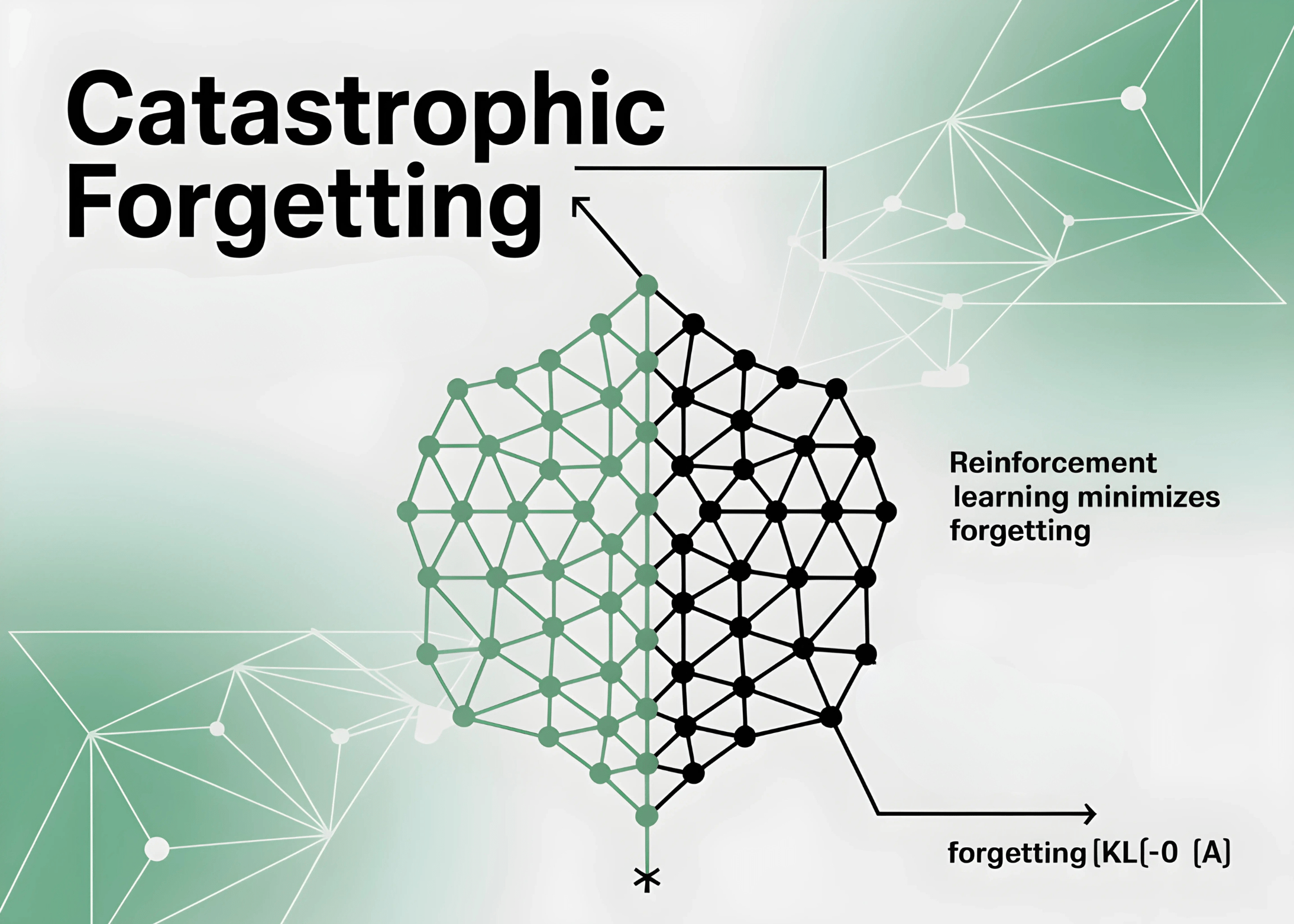

A New MIT Study Shows Reinforcement Learning Minimizes Catastrophic Forgetting Compared to Supervised Fine-Tuning

What is catastrophic forgetting in foundation models? Foundation models excel in diverse domains but are largely static once deployed. Fine-tuning on new tasks often introduces catastrophic forgetting—the loss of previously…

How to Create a Bioinformatics AI Agent Using Biopython for DNA and Protein Analysis

class BioPythonAIAgent: def __init__(self, email="[email protected]"): self.email = email Entrez.email = email self.sequences = {} self.analysis_results = {} self.alignments = {} self.trees = {} def fetch_sequence_from_ncbi(self, accession_id, db="nucleotide", rettype="fasta"): try: handle…

Meta Superintelligence Labs Introduces REFRAG: Scaling RAG with 16× Longer Contexts and 31× Faster Decoding

A team of researchers from Meta Superintelligence Labs, National University of Singapore and Rice University has unveiled REFRAG (REpresentation For RAG), a decoding framework that rethinks retrieval-augmented generation (RAG) efficiency.…

From Pretraining to Post-Training: Why Language Models Hallucinate and How Evaluation Methods Reinforce the Problem

Large language models (LLMs) often generate “hallucinations”, confident yet incorrect outputs that appear plausible. Despite improvements in training methods and architectures, hallucinations persist. New research from OpenAI provides a…

Tilde AI Releases TildeOpen LLM: An Open-Source Large Language Model with Over 30 Billion Parameters and Support for Most European Languages

Latvian language-tech firm Tilde has released TildeOpen LLM, an open-source foundational large language model (LLM) purpose-built for European languages, with a sharp focus on under-represented and smaller national and regional…

Implementing DeepSpeed for Scalable Transformers: Advanced Training with Gradient Checkpointing and Parallelism

In this advanced DeepSpeed tutorial, we provide a hands-on walkthrough of cutting-edge optimization techniques for training large language models efficiently. By combining ZeRO optimization, mixed-precision training, gradient accumulation, and advanced…

Meet ARGUS: A Scalable AI Framework for Training Large Recommender Transformers to One Billion Parameters

Yandex has introduced ARGUS (AutoRegressive Generative User Sequential modeling), a large-scale transformer-based framework for recommender systems that scales up to one billion parameters. This breakthrough places Yandex among a small…